Research

Sloppy models

Many scientific models are sloppy: their predictions depend strongly on only a few combinations of parameters, while many other combinations can vary widely with minimal effect on the model’s predictions. Recognizing and accounting for sloppiness is essential for understanding model behavior and for guiding uncertainty quantification and experimental design.

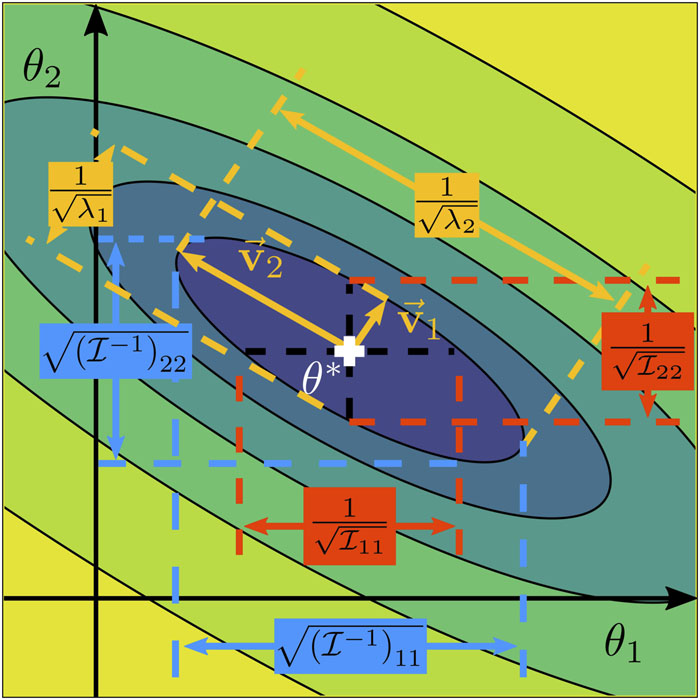

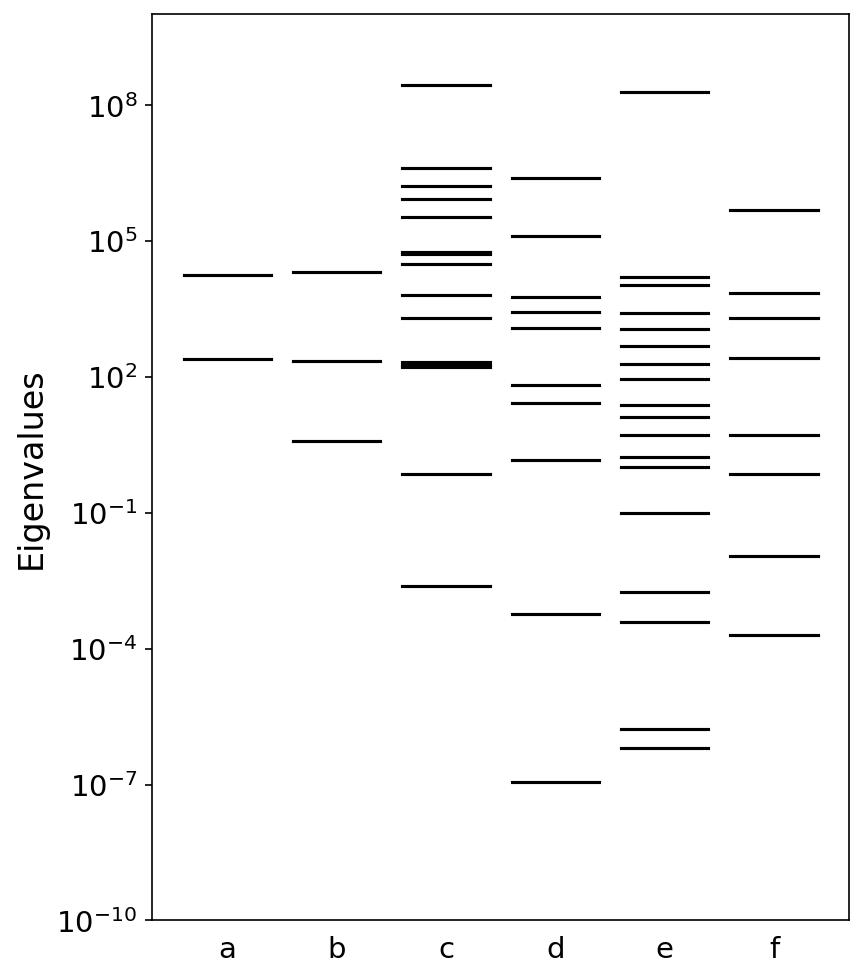

A key tool for analyzing sloppy models is the Fisher Information Matrix (FIM), which quantifies how much information the data contain about model parameters. In sloppy models, the eigenvalues of the FIM span several orders of magnitude, reflecting the wide variation in parameter sensitivities. The eigenvectors indicate which combinations of parameters can be tightly constrained by data and which remain effectively unconstrained, providing insight into the structure of uncertainty in the model.
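As a minimal sketch of this analysis, the snippet below builds the FIM for a toy sum-of-exponentials model and inspects its eigenvalue spectrum. The model, the decay rates, the sampling times, and the noise level `sigma` are all illustrative assumptions chosen for this example, and the FIM is taken in its least-squares form (Jacobian transposed times Jacobian, divided by the noise variance), which assumes independent Gaussian noise.

```python
import numpy as np

def model(theta, t):
    """Toy model (an assumption of this sketch): y(t) = sum_k exp(-theta_k * t)."""
    return np.exp(-np.outer(t, theta)).sum(axis=1)

def jacobian(theta, t):
    """Analytic sensitivities dy_i/dtheta_k = -t_i * exp(-theta_k * t_i)."""
    return -t[:, None] * np.exp(-np.outer(t, theta))

def fisher_information(theta, t, sigma=1.0):
    """FIM for i.i.d. Gaussian noise with standard deviation sigma."""
    jac = jacobian(theta, t)
    return jac.T @ jac / sigma**2

# Hypothetical decay rates and measurement times.
theta = np.array([0.5, 1.0, 1.5, 2.0])
t = np.linspace(0.1, 5.0, 50)

fim = fisher_information(theta, t, sigma=0.1)

# eigh returns eigenvalues in ascending order; columns of eigvecs are the
# corresponding parameter combinations.
eigvals, eigvecs = np.linalg.eigh(fim)
spread = np.log10(eigvals[-1] / eigvals[0])

print("eigenvalues:", eigvals)
print("orders of magnitude spanned:", spread)
print("stiffest combination:", eigvecs[:, -1])
print("sloppiest combination:", eigvecs[:, 0])
```

The last column of `eigvecs` is the parameter combination the data constrain most tightly, and the first column is the one they constrain least.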

Uncertainty quantification

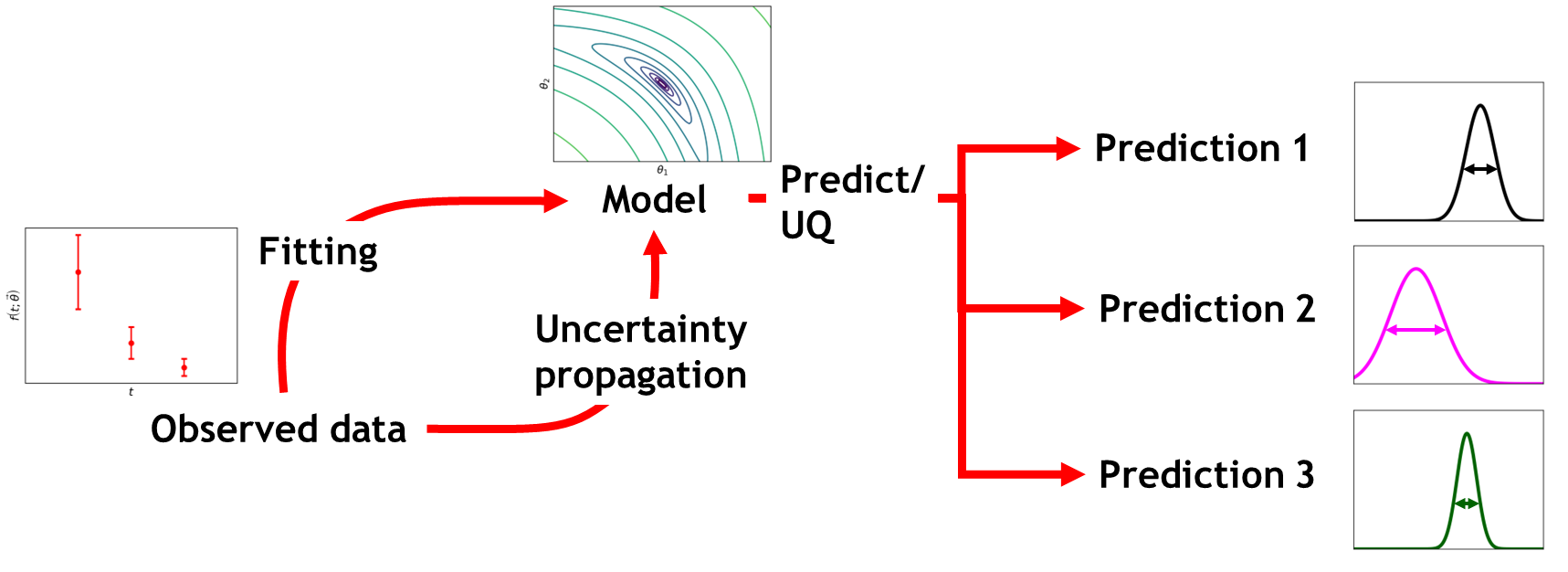

Model performance is often evaluated by its prediction accuracy against some ground truth. However, ground-truth data are not always readily available, and acquiring them can be costly, time-consuming, or even infeasible. In such cases, prediction precision, measured by the model’s uncertainty, can serve as a surrogate for reliability.

Uncertainty quantification (UQ) studies how uncertainties inherent in models, data, and assumptions propagate through the modeling process. This includes uncertainty in parameter estimation, model structure, and input data, as well as their impact on final predictions. By formally analyzing these uncertainties, UQ provides a principled way to assess how much confidence can be placed in predictions, identify critical sources of variability, and guide both model improvement and experimental design. Furthermore, to provide meaningful guidance alongside experimental or observational data, predictions should be accompanied not only by an estimate of prediction error, but also by a quantified measure of predictive uncertainty.
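To make the propagation step concrete, here is a small sketch of linearized uncertainty propagation: parameter uncertainty is approximated by the inverse of the FIM, and then pushed through the sensitivities of the predictions. The straight-line model, the training inputs, and the noise level are hypothetical choices for illustration, and the linearization is only one of several ways to propagate uncertainty (it happens to be exact for this linear toy model).

```python
import numpy as np

def line_jacobian(x):
    """Sensitivities of y = a + b * x with respect to (a, b): rows [1, x_i]."""
    return np.column_stack([np.ones_like(x), x])

def parameter_covariance(jac, sigma):
    """Linearized parameter covariance: inverse of the FIM J^T J / sigma^2."""
    fim = jac.T @ jac / sigma**2
    return np.linalg.inv(fim)

def prediction_std(jac_pred, cov):
    """Standard deviation of each prediction: sqrt(diag(J_p C J_p^T))."""
    return np.sqrt(np.einsum("ij,jk,ik->i", jac_pred, cov, jac_pred))

# Hypothetical training inputs on [0, 1] with noise level sigma.
sigma = 0.1
x_train = np.linspace(0.0, 1.0, 11)
cov = parameter_covariance(line_jacobian(x_train), sigma)

# One prediction inside the training range, one far outside it.
x_pred = np.array([0.5, 5.0])
std = prediction_std(line_jacobian(x_pred), cov)

print("parameter covariance:\n", cov)
print("prediction std at x = 0.5 and x = 5.0:", std)
```

In this toy setting the predictive standard deviation far from the training inputs is more than ten times larger than at their center, which is the kind of information a point prediction alone does not convey.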

Modern machine learning models are increasingly applied in domains that demand high reliability, including materials science, medicine, and autonomous systems. In these contexts, accurate predictions alone are not enough; understanding their uncertainty is critical for making safe, robust, and scientifically defensible decisions. Without proper UQ, model predictions may appear confident even in regions where data are scarce or parameters are poorly constrained, leading to overconfident or misleading inferences.

Information-matching approach for active learning

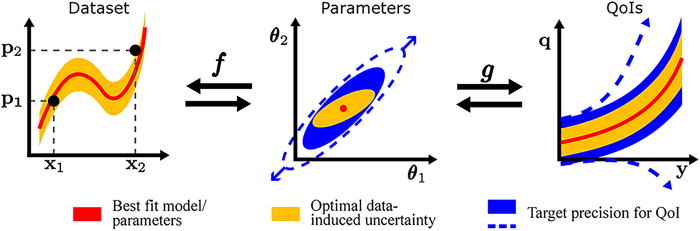

Even in the era of big data, high-quality scientific data remain expensive. Active learning (AL) aims to address this challenge by selecting experiments that are maximally informative. Most AL strategies seek to minimize global uncertainty, either in model parameters or in predictions. In practice, however, this objective can be computationally and scientifically inefficient. Many scientific models are ill-conditioned; parameters are often unidentifiable or sloppy; and large regions of parameter space have negligible influence on the quantities that ultimately drive decisions. As a result, substantial effort may be spent reducing uncertainty in directions that do not meaningfully affect downstream predictions.

What if the objective were changed?

Instead of minimizing uncertainty everywhere, we propose requiring only sufficient uncertainty reduction in specific quantities of interest (QoIs). This shift reframes active learning around decision-relevant information rather than global parameter precision. Together with Mark Transtrum, I developed an information-matching framework for active learning based on this principle. Rather than repeatedly solving ill-posed inverse problems, the method directly aligns experimental information with the sensitivities of downstream QoIs.

This perspective offers several advantages:

- Avoids unnecessary parameter inversions

- Focuses only on parameter combinations that influence decision-relevant predictions

- Naturally accounts for model sloppiness and identifiability

- Leads to a convex and scalable optimization formulation

By explicitly linking experimental design to the geometry of parameter space and to the structure of quantities of interest, information matching provides a principled and computationally efficient approach to data acquisition under uncertainty.
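The idea of matching information can be illustrated with a small check. The sketch below assumes one possible reading of the matching condition: the weighted sum of the candidate experiments' FIMs should dominate a target FIM derived from the required QoI precision, in the sense that their difference is positive semidefinite. That matrix inequality, the function names, the three-parameter toy problem, and the weights are all assumptions made for illustration. The snippet only tests whether a given set of weights satisfies the condition; it does not solve the convex optimization that selects the weights.

```python
import numpy as np

def weighted_information(weights, candidate_fims):
    """Sum_m w_m * I_m for candidate FIMs stacked with shape (M, N, N)."""
    return np.tensordot(weights, candidate_fims, axes=1)

def information_gap(weights, candidate_fims, target_fim):
    """Smallest eigenvalue of sum_m w_m I_m - J.

    A non-negative value means the weighted candidates supply at least as
    much information as the target in every parameter direction.
    """
    diff = weighted_information(weights, candidate_fims) - target_fim
    return np.linalg.eigvalsh(diff)[0]

# Hypothetical problem: three parameters, three candidate measurements,
# each sensitive to exactly one parameter (unit noise).
candidate_jacs = np.eye(3)
candidate_fims = np.array([np.outer(j, j) for j in candidate_jacs])

# The QoI depends only on the first parameter and must be known to
# within a standard deviation tau.
tau = 0.5
qoi_jac = np.array([[1.0, 0.0, 0.0]])
target_fim = qoi_jac.T @ qoi_jac / tau**2

w_relevant = np.array([4.0, 0.0, 0.0])     # data only where the QoI is sensitive
w_irrelevant = np.array([0.0, 10.0, 10.0])  # more data, but in irrelevant directions

gap_relevant = information_gap(w_relevant, candidate_fims, target_fim)
gap_irrelevant = information_gap(w_irrelevant, candidate_fims, target_fim)

print("gap with relevant data:", gap_relevant)
print("gap with irrelevant data:", gap_irrelevant)
```

In this toy case a small amount of data along the direction the QoI depends on satisfies the condition, while a larger amount of data along the other two directions does not, which mirrors the argument for targeting decision-relevant information rather than global parameter precision.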